Artificial Intelligence for Physiotherapy

HealthHack Sydney 2017

Last weekend, Coviu took part in the Sydney HealthHack event and submitted an interesting problem: CAPTURING REHABILITATION THERAPY PROGRESS THROUGH ARTIFICIAL INTELLIGENCE. The team was awesome, and what they achieved blew our minds and apparently also the minds of the judges, because this project won the 2017 HealthHack in Sydney!

The challenge

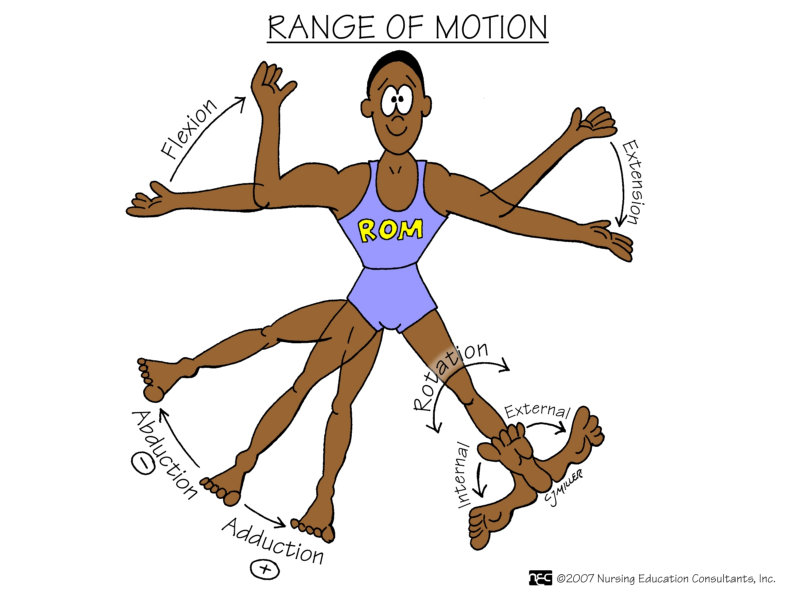

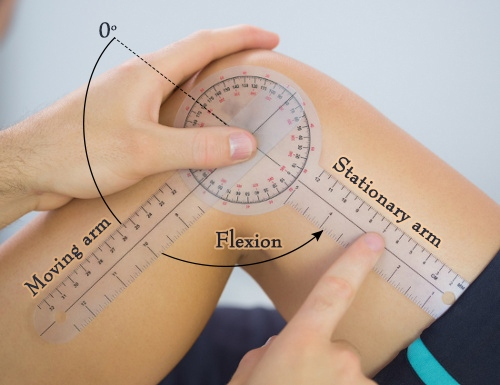

The challenge that we posed was to track the progress of the Range of Motion (ROM) of physiotherapy patients using Computer Vision. Physiotherapists track Range of Motion by measuring the angles through which a limb moves around a joint.

ROM may be limited by surgery, an accident, a stroke or other causes. Reportedly, up to 70% of patients give up physiotherapy too early, often because they cannot see their progress. Automated tracking of ROM via a mobile app could help patients reach their physiotherapy goals.

Similarly, physiotherapists who provide therapy (e.g. via video consultation using Coviu) could benefit from automated tracking of ROM between therapy sessions, both to track patient progress more accurately, objectively and easily and to document it better.

Currently, the recommended tool for physiotherapists to measure these angles is the goniometer, but it is cumbersome to use and not always accurate, since it interferes with the motion path of the patient.

[embed]https://www.youtube.com/watch?v=ZUF7tpkVAIY[/embed]

Another solution in use is a computerised system that requires patients to wear sensors. This is certainly not usable by patients at home.

[embed]https://www.youtube.com/watch?v=PrbmBMehYx0[/embed]

The solution

The proposed idea for this challenge was to use a video camera to capture a person’s movement and objectively calculate the angles between their limbs through Computer Vision, preferably in real-time. In addition, an automated progress report would be created.

This would help a physio become more objective in their work and do less paperwork at the same time. Ideally, the ROM analysis would be done in real-time during a live consultation, which could be held online.

Here are the slides that we used to present the challenge.

The team and its achievement

The team of 7 that got together to take on the challenge was amazing:

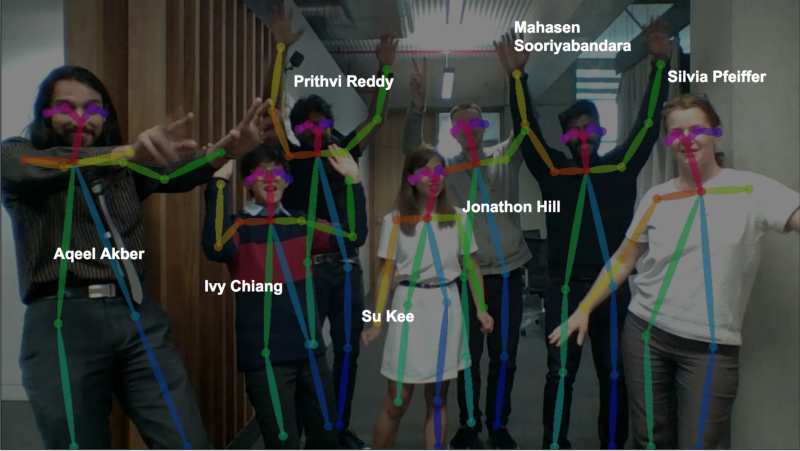

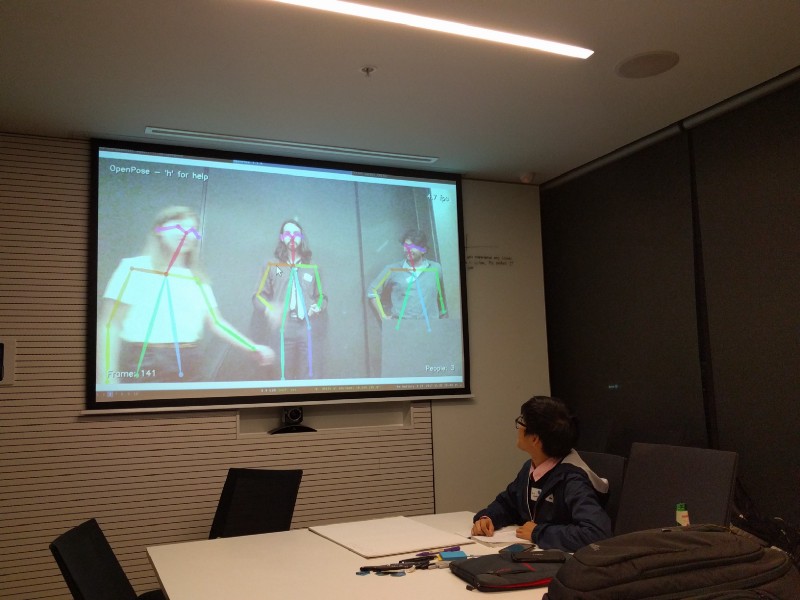

Aqeel, Prithvi & Mahasen came all the way from Canberra on a bus to attend HealthHack. Being PhD candidates in physics, they were looking for a challenging project and this was it! They even dug into the Computer Vision libraries during their bus trip from Canberra and made the “stick figures” work before arriving in Sydney!

Jono and Silvia focused on the Web development parts of the challenge.

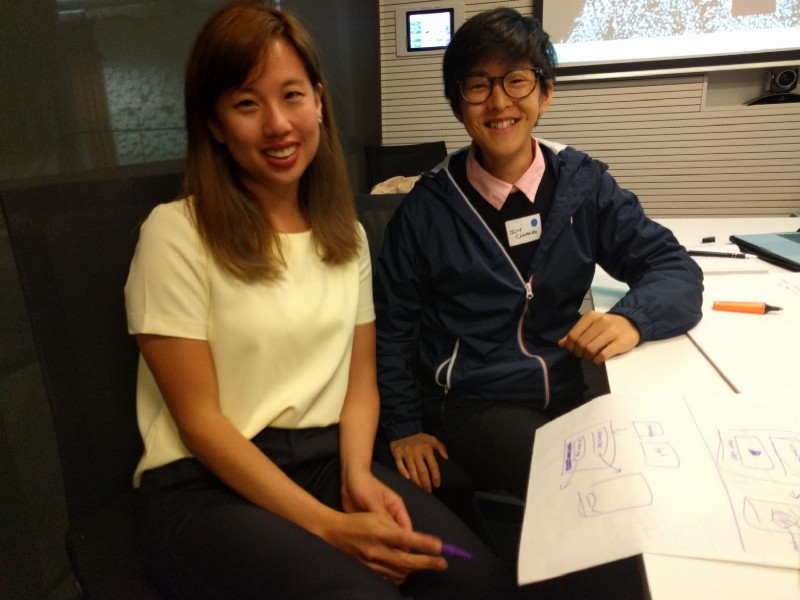

Ivy and Su joined as UI/UX designers with Su also providing a healthcare background.

And with that we had all the capabilities we needed. On top of this, every single person in the project came with a positive attitude and the will to collaborate and have fun, which made for a perfect environment to get things done. In fact, Ivy, Aqeel, Prithvi & Mahasen had all previously won other hackathons. What a star team!

We first sorted out the details of what we wanted to achieve on the Friday evening and could then focus on delivering on the second day. The enthusiasm of our visiting physicists was astounding: they worked through the night to bring up a Python-based web service and the algorithm to calculate the angles. By Saturday midday, the first version of automated ROM calculation was working:

[embed]https://www.youtube.com/watch?v=6p6oaIISKdM[/embed]

At the same time, our UX team discussed how this would best be delivered to patients and physiotherapists. They built a user story together with a whole set of wireframes for application interfaces, both for a stand-alone patient application and for a potential integration into Coviu. They wanted to create a design that would help patients build a routine and offer features that help them improve.

By 5pm on Saturday, when the pitches for the different projects were due, we had not only finalized the demo, but also put together a really awesome pitch deck.

The approach

For those interested in the technical details, the code is published in this GitHub repo: https://github.com/admiralakber/physio-rom

We used the CMU library OpenPose to detect limbs and joints. Its capabilities are really quite impressive. The output is a JSON file that contains the positions of all detected limbs and joints.

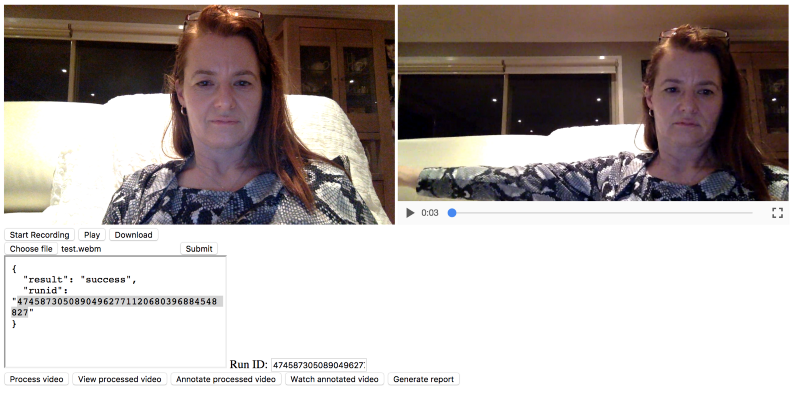

From the JSON file, the team calculated the angles and returned them as another JSON file, which was rendered on top of the video.
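For readers who want a feel for the angle calculation, here is a minimal sketch in Python. It is not the team's code (that lives in the GitHub repo linked above). It assumes each joint is available as an (x, y) pixel position, and that keypoints arrive as a flat list of x, y, confidence triples, which is how we understand the pose JSON to be laid out. The function names `joint_angle` and `keypoint` and the sample coordinates are made up for illustration.

```python
import math


def keypoint(flat, index):
    """Return (x, y, confidence) for one joint.

    Assumes `flat` is laid out as [x0, y0, c0, x1, y1, c1, ...].
    """
    return tuple(flat[3 * index:3 * index + 3])


def joint_angle(a, b, c):
    """Angle in degrees at joint `b`, between the segments b->a and b->c.

    Each argument is a sequence whose first two items are x and y.
    Returns None if either segment has zero length.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(v1[0], v1[1])
    n2 = math.hypot(v2[0], v2[1])
    if n1 == 0 or n2 == 0:
        return None
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))


# Made-up pixel positions for a shoulder, elbow and wrist.
shoulder, elbow, wrist = (100, 100), (100, 200), (200, 200)
elbow_angle = joint_angle(shoulder, elbow, wrist)  # a right angle here
```

Running this once per video frame gives a series of angles for each joint, which is the kind of data a progress report can be built from. Note that this is a 2D angle in the image plane, so it depends on the camera seeing the movement side-on.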

To capture the video, we developed a Web page that uses the new browser video APIs (getUserMedia and MediaRecorder) to capture the video from the camera and record it to a file. This code is based on Sam Dutton’s Media Recorder example.

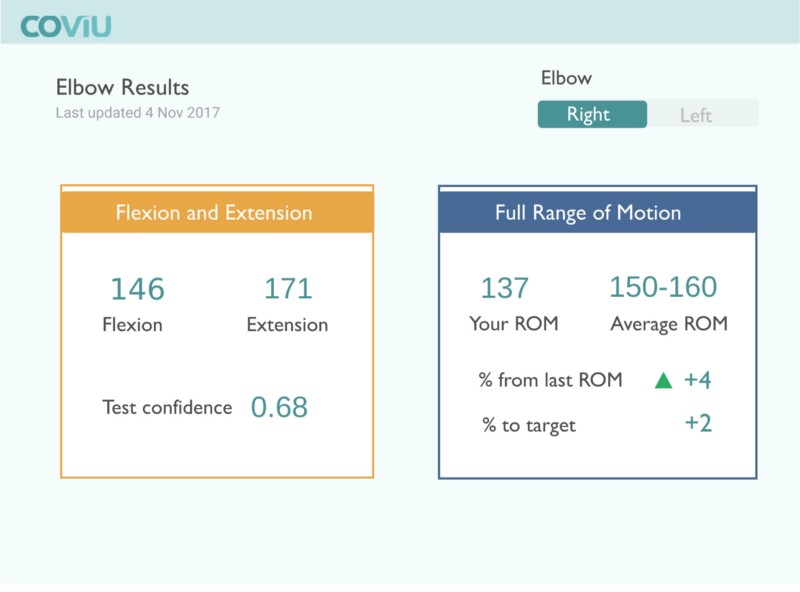

Finally, there was also an algorithm to analyse the resulting angles and aggregate them into a report for the physiotherapist.
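To give an idea of what such an aggregation could look like, here is a small Python sketch. It is our own illustration, not the algorithm the team built: it assumes each session is a list of per-frame joint angles in degrees (with `None` for frames where the joint was not detected) and reports the smallest angle, the largest angle and the range between them. The names `summarise_session` and `progress_report` and the dictionary keys are hypothetical.

```python
def summarise_session(angles):
    """Summarise one session's per-frame angles (degrees, None = no detection)."""
    valid = [a for a in angles if a is not None]
    if not valid:
        return None
    smallest, largest = min(valid), max(valid)
    return {
        "min_deg": smallest,
        "max_deg": largest,
        "range_deg": largest - smallest,
        "frames": len(valid),
    }


def progress_report(sessions):
    """Build a report from (label, angles) pairs given in chronological order.

    Sessions without any valid angle are skipped. Each entry records how the
    range of motion changed compared with the first usable session.
    """
    report = []
    baseline = None
    for label, angles in sessions:
        summary = summarise_session(angles)
        if summary is None:
            continue
        if baseline is None:
            baseline = summary["range_deg"]
        entry = {"session": label}
        entry.update(summary)
        entry["change_vs_first_deg"] = summary["range_deg"] - baseline
        report.append(entry)
    return report
```

Calling `progress_report([("week 1", week1_angles), ("week 2", week2_angles)])` would then give one entry per session, which a front end could turn into a chart for the patient and the physiotherapist.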

Here is the team’s final talk and demo:

https://youtu.be/BbRzcYr6Hcs

And so it came about that, after a successful pitch and demonstration, this team won the HealthHack 2017 challenge.

Next Steps

The project was so successful and the team had so much fun together that there was chatter about how we could continue to work together. There is certainly a lot to do before this is turned into an actual product, but all the key parts exist. What an amazing outcome — congratulations!

—

If you are a physiotherapist, we’d like to get your feedback about this technology and its potential. Please fill in this questionnaire.

—